PyTorch is an open-source machine learning framework that has been widely adopted by data scientists and developers. Based on the Torch library, it is free to use and has a wide range of applications, including computer vision and natural language processing.

Background Story

PyTorch was originally developed by Facebook AI Research (FAIR), the lab now known as Meta AI. The framework was publicly released in January 2017 and has since seen rapid uptake in the data science and developer communities. PyTorch is based on Torch, a scientific computing framework whose scripting interface is built on the Lua programming language.

Target Customers

PyTorch is used by a wide range of customers, including professional services organizations, startups, and large enterprises. Some of the featured customers of PyTorch include:

- Lightricks

- Jasper

- Anthropic

- MosaicML

- Falkonry, and many others.

Funding, Capital Raised, Estimated Revenue

PyTorch is open-source software and does not have a traditional funding model. However, it is backed by Facebook AI Research (FAIR), now part of Meta AI, which provides resources and support for the framework's development. As of 2021, no estimated revenue figures for PyTorch are available.

Products and Services

PyTorch is an end-to-end machine learning framework that enables fast, flexible experimentation and efficient production through a user-friendly front end, distributed training, and an ecosystem of tools and libraries. Tools and libraries in that ecosystem include:

- PyTorch Lightning: A lightweight PyTorch wrapper for high-performance AI research, maintained by Lightning AI rather than the core PyTorch team.

- TorchServe: A model serving library for PyTorch models.

- TorchElastic: A library for elastic, fault-tolerant PyTorch training, including on Kubernetes. It now ships as part of torch.distributed.

- PyTorch Hub: A pre-trained model repository designed to facilitate research reproducibility.

- PyTorch Mobile: A framework for deploying PyTorch models on mobile devices.

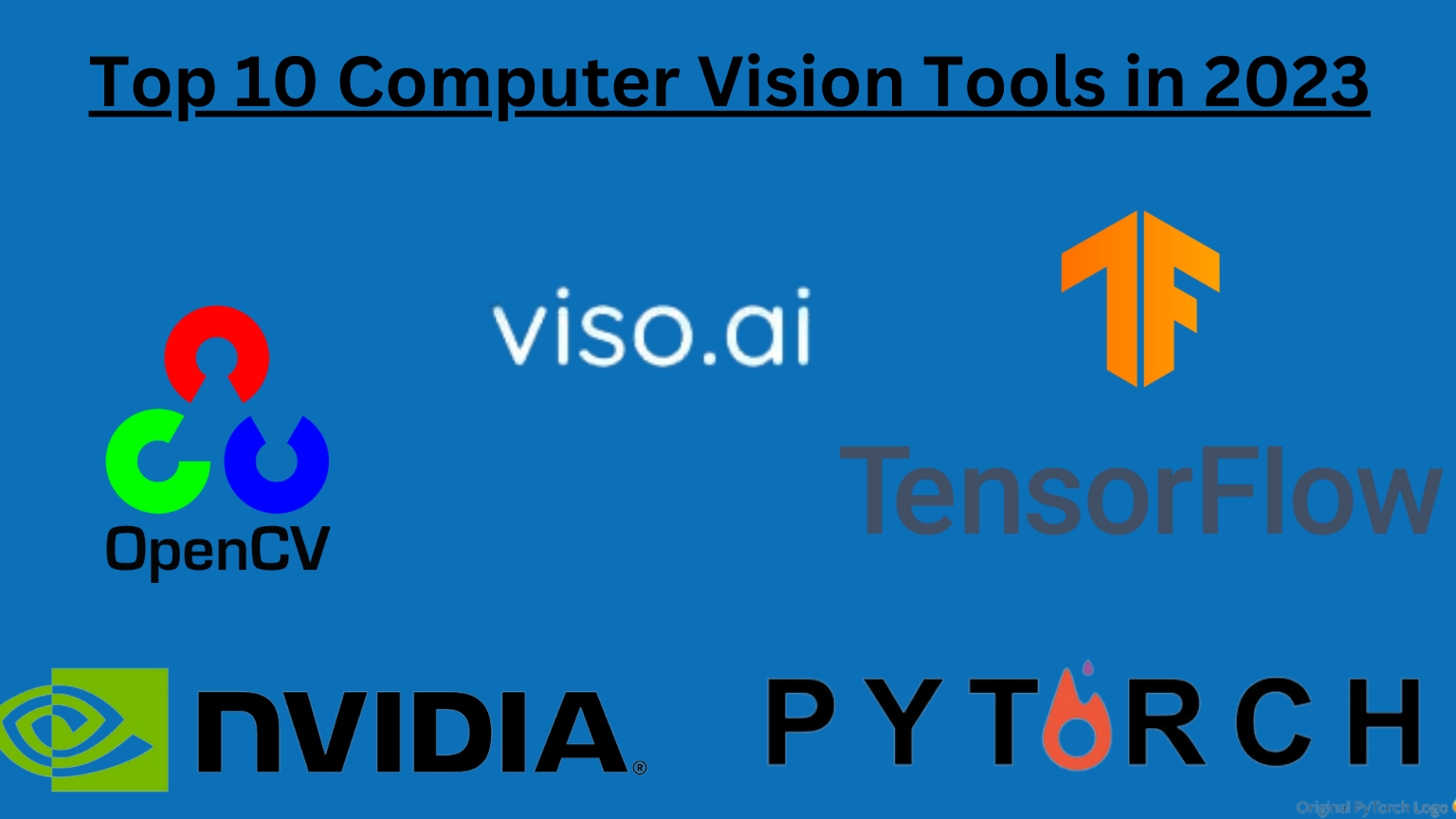

Competitors

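PyTorch’s main competitors are other deep learning frameworks, most notably TensorFlow, which several of the comparisons below refer to.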

Pros and Cons of PyTorch

Pros

- Deep Learning Framework: PyTorch provides tensors, the multi-dimensional arrays that ML models are built from, together with automatic differentiation over them.

- GPU Compatibility: PyTorch can run on GPUs, which typically makes model training much faster than on a CPU.

- Debugging and NumPy Integration: PyTorch tensors convert to and from NumPy arrays, and because computation is dynamic, standard Python debugging tools work on model code.

- User-friendly: For many users, PyTorch is one of the easiest deep learning frameworks. It’s simple to define a model, set hyperparameters, and initiate training.

- Active Community: There is an active community around PyTorch. Issues often get resolved swiftly when posted online.

- Error Highlighting: Because PyTorch code runs eagerly, errors surface as ordinary Python exceptions at the line that caused them, which users find simpler than debugging in frameworks like TensorFlow.

- Distributed Data Parallelization: The DistributedDataParallel module replicates a model across processes or machines and synchronizes gradients, giving users control over multi-GPU training.

- Language Flexibility: PyTorch offers a C++ front end in addition to its Python API.

- Dynamic Graphing: PyTorch builds its computation graph as the code runs, so models can use ordinary Python control flow. Users cite this as an edge over frameworks that require a static graph.

- Versatility: PyTorch’s way of integrating various layers and architectures makes it suitable for both R&D and production.

- Documentation and Simplicity: Many users commend the documentation and the simplicity of state-of-the-art implementations in PyTorch.
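
Several of the points above (NumPy integration, GPU compatibility, defining a model and starting training, and dynamic graphing) can be seen together in a few lines. The following is a minimal sketch rather than a recommended recipe: the `TinyRegressor` model, its `depth` argument, the synthetic data, and the hyperparameter values are all hypothetical and chosen only for illustration.

```python
import numpy as np
import torch
from torch import nn

torch.manual_seed(0)

# Pick a GPU when one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# NumPy interop: from_numpy wraps the array without copying (on the CPU);
# .to(device) copies only when the target device is different.
features_np = np.random.default_rng(0).normal(size=(64, 3)).astype(np.float32)
features = torch.from_numpy(features_np).to(device)

# Synthetic targets: a fixed linear function of the inputs (illustrative data).
true_weights = torch.tensor([[1.5], [-2.0], [0.5]], device=device)
targets = features @ true_weights


class TinyRegressor(nn.Module):
    """Hypothetical model whose depth is chosen on every call."""

    def __init__(self):
        super().__init__()
        self.hidden = nn.Linear(3, 3)
        self.out = nn.Linear(3, 1)

    def forward(self, x, depth=1):
        # Ordinary Python control flow decides how many times the hidden
        # layer is applied, so the graph is rebuilt on each forward pass.
        for _ in range(depth):
            x = x + torch.tanh(self.hidden(x))
        return self.out(x)


model = TinyRegressor().to(device)
learning_rate = 0.05  # hyperparameter, illustrative value
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
loss_fn = nn.MSELoss()

losses = []
for step in range(200):
    optimizer.zero_grad()
    prediction = model(features, depth=1 + step % 2)  # depth alternates 1, 2
    loss = loss_fn(prediction, targets)
    loss.backward()  # autograd follows whichever graph this step built
    optimizer.step()
    losses.append(loss.item())

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}")
```

The same script runs unchanged on a CPU-only machine, since the device is selected at runtime.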

Cons

- Complex Functions: Some users find the functions and methods for Deep Learning in PyTorch hard to remember.

- Documentation: There are concerns about the documentation not being user-friendly, especially with updates to new versions.

- Visualization Tools: Users report that PyTorch lags in built-in monitoring and visualization tools compared to TensorFlow’s TensorBoard, although PyTorch can log to TensorBoard through torch.utils.tensorboard.

- Performance Issues: Some users find that as applications grow in size, PyTorch’s processing speed decreases, impacting overall performance.

- Dataloader Inefficiency: There are reports of dataloaders being inefficient and causing bottlenecks.

- Scalability with Small Data: Some users report that PyTorch is not the best fit when training on very small datasets.

- Limited Online Support: Some users feel there is not enough online support for troubleshooting PyTorch issues.

- Issues with Large-scale Production: Some users find PyTorch less effective for running large-scale models in production.

- Deployment Challenges: Integrating into applications can be challenging, and deploying developed models on mobile platforms is difficult with PyTorch.

- Lack of Diversified Support: More diversified community support is desired by some users, especially in comparison to TensorFlow.

- Debugging Difficulties: For some, debugging in PyTorch becomes a significant issue, particularly when identifying the causes of errors.
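
On the dataloader bottlenecks mentioned above, the usual first step is to look at a handful of `DataLoader` settings. The sketch below is a hedged illustration, not a tuning guide: `make_loader` is a hypothetical helper, the data is synthetic, and the values that work well depend on the dataset and hardware.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def make_loader(dataset, batch_size=32, num_workers=0):
    """Hypothetical helper gathering the settings that most often affect throughput."""
    return DataLoader(
        dataset,
        batch_size=batch_size,
        shuffle=True,
        num_workers=num_workers,               # >0 loads batches in worker processes
        pin_memory=torch.cuda.is_available(),  # page-locked memory speeds host-to-GPU copies
        persistent_workers=num_workers > 0,    # keep workers alive between epochs
    )


# Synthetic stand-in data: 256 samples with 8 features each.
dataset = TensorDataset(torch.randn(256, 8), torch.randint(0, 2, (256,)))

# num_workers=0 loads in the main process. Raise it (inside an
# `if __name__ == "__main__":` guard) when data loading is the bottleneck.
loader = make_loader(dataset, batch_size=32, num_workers=0)

batch_shapes = [tuple(inputs.shape) for inputs, _ in loader]
print(len(batch_shapes), batch_shapes[0])
```

Timing one pass over the loader with different `num_workers` values is a simple way to check whether loading, rather than the model, is the limiting factor.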